(this blog post is co-written by Monica Duke and Leo Mack)

The recent DCMI (Dublin Core Metadata Initiative) conference in Porto was co-located with the International Conference on Theory and Practice of Digital Libraries (TPDL), which gave the programme a rich mix of theory and practice. Many of the topics discussed resonated with our current work at Jisc, so the conference was a great opportunity to catch up on the latest progress in these areas. The full conference proceedings are now available; some sessions are also documented with copies of presentation slides.

In this blog post, we report on three sessions that focused on particularly relevant themes: identity management using identifiers, expressing rights and licences in human- and machine-friendly ways, and data management planning.

Image from DCMI website


Expressing rights in research data

Slides from the Special Session

Delivered by Antoine Isaac (Mark Matienzo, Michael Steidl)

This special session – Lightweight rights modeling and linked data publication for online cultural heritage – offered key insights into how the cultural heritage sector deals with the human and machine readable expression of rights for digital resources.

The parallels with the research data landscape are interesting. In the cultural heritage sector, the challenge is to simplify semantically and legally complex rights statements into something more intelligible and consistent, a process that has resulted in the excellent rightsstatements.org.

Perhaps the opposite is true in research data management, where data is often published with an attached licence that may or may not be machine readable, but with little other information about the rights, the rights holders or the conditions of access to the data. Even if some of this information is unavailable or inapplicable, the idea of creating meaningful, human-readable statements about the conditions of use for data also has its place.

Work being carried out with Jisc by the University of Glasgow has gathered the perceptions of the UK academic sector about this challenging area, and is producing a set of outputs that can help both depositors and users of data better understand the opportunities and limitations offered by various licences. The attendance, engagement level, and standard of feedback collected by the University of Glasgow from the three workshops so far clearly show that this is an area of interest and anxiety for many in the sector. Drawing inspiration from examples of innovation and established best practice in a related sector seems to be an appropriate first step.

Image from Twitter @taxobob


PIDs in Dublin Core

Slides from the session

Co-ordinated by Paul Walk

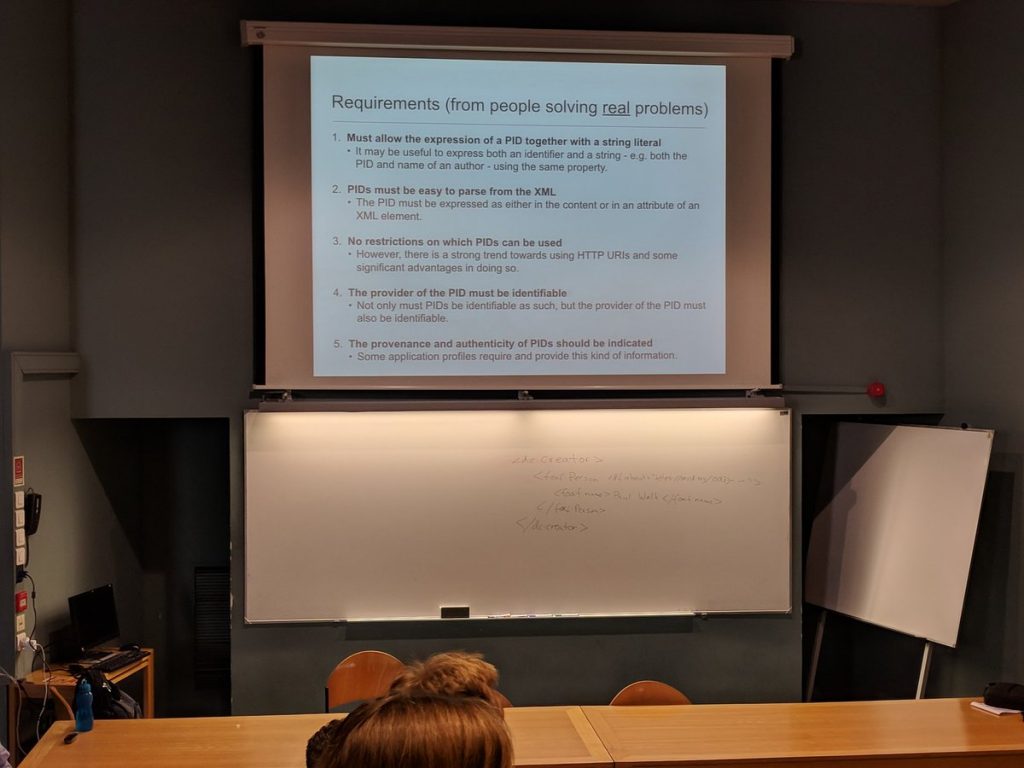

This session aimed to consider how to provide Persistent Identifiers (PIDs) in metadata that uses the Dublin Core vocabulary. This topic is of particular interest to Jisc, as the consortium lead working with the UK ORCID consortium member institutions. Hackdays and other activity have highlighted the need to include ORCID IDs in the metadata that institutional repositories expose to downstream services. For example, the eprints Advance ORCID plugin, which was released in 2018, includes the ORCID ID in a number of metadata exports. Until now there has not been a clear recommendation or community-agreed convention, so a meeting to reach community consensus was very welcome.

In the run-up to the session, the community was invited to submit use cases using GitHub. The submitted user stories can be viewed as issues. The requirements gathered in this way were then summed up in a paper prior to the session (also available in the GitHub repository). Jisc also submitted a user story describing requirements, based on the ORCID UK hackday community input.

The session considered a number of solutions against the requirements, whilst striving to stay faithful to some basic principles important to DCMI (e.g. simplicity, semantic interoperability). The proposed solution that the session agreed on will now be discussed more widely, with a suggestion to take it to PIDapalooza 2019. A report from the session is also being shared. In a nutshell, the solution proposes white-space-separated multiple identifiers (preferably http URIs) included in an id attribute within existing Dublin Core elements.
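To make the proposal a little more concrete, here is a minimal sketch of how a downstream service might read such identifiers. It is only an illustration under stated assumptions: the `record` wrapper element, the sample values, the example.org URI and the function name `identifiers_by_element` are all hypothetical, and the final serialisation agreed by the community may well differ.

```python
import xml.etree.ElementTree as ET

# Hypothetical record for illustration only: the wrapper element, the
# values and the example.org URI are assumptions, not part of any
# agreed DCMI recommendation.
RECORD = """
<record xmlns:dc="http://purl.org/dc/elements/1.1/">
  <dc:creator id="https://orcid.org/0000-0002-1825-0097 https://example.org/person/42">Carberry, Josiah</dc:creator>
  <dc:title>An example dataset</dc:title>
</record>
"""


def identifiers_by_element(xml_text):
    """Return (tag, text, identifiers) for every element with an id attribute."""
    root = ET.fromstring(xml_text)
    found = []
    for element in root.iter():
        raw = element.get("id")
        if raw is None:
            continue  # element carries no identifiers
        # str.split() with no argument splits on any run of white space,
        # which matches the white-space-separated convention described above.
        found.append((element.tag, (element.text or "").strip(), raw.split()))
    return found


for tag, text, ids in identifiers_by_element(RECORD):
    print(tag, text, ids)
```

Keeping the identifiers in an attribute leaves the element text untouched, so consumers that ignore the attribute would still see plain Dublin Core.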

Image from DMP workshop collaborative notes document


Machine-actionable Data Management Plans

Documentation from the session

Delivered by Tomasz Miksa (SBA Research) and João Cardoso (INESC-ID)

A third interesting workshop focussed on the idea of machine-actionable Data Management Plans (DMPs). It built on the work of the RDA working group for DMP Common Standards. In the past few years, it has become a common funding requirement for researchers to report which data their projects create, how they address potential sensitivity issues (e.g. due to privacy or other confidentiality constraints), and how they plan to manage and maintain data. Services such as the DCC’s DMPonline have become popular because they help users to write DMPs that meet the specific requirements of various funders.

However, the current implementation of DMPs as static documents also limits their utility – and possibly even contributes to their perception as just another administrative task. Instead, the vision presented at the workshop was that, in a world of Open Science, machine-actionable DMPs should become rich interactive resources, which help to facilitate and optimise data management throughout the entire research life cycle. Tomasz and João acknowledged that a human-centric narrative – and thus some form of document – will still be needed in that future. However, DMP documents would only be generated as a by-product of an advanced DMP system which could integrate directly with, for example, the data storage systems of institutions, reporting systems for finance/procurement offices, and the reporting back-ends of funders. What became clear during the workshop is that the implementation of machine-actionable DMPs depends on three interrelated pre-conditions: 1) a clear DMP policy (which formulates clear DMP requirements); 2) well-structured workflows (which reflect policy requirements and enable integration with the system’s technical environment); and 3) a concise but comprehensive data model.

With regard to the last two aspects, the workshop included two group exercises and discussions. In the first group exercise, we discussed the potential of modelling automated workflows, which would guide researchers through the various stages of formulating a DMP. This includes, for example, the specification of the type of data, storage requirements, licence, metadata standards, and repository deposit (you can find a handout here). One of the great opportunities identified by participants was that machine-actionable DMP workflows could integrate directly with various other systems such as repositories, data analytics tools, and possibly even financial auditing systems. This could, for example, facilitate the selection of DMP-compliant repositories or data formats and potentially even produce data on the cost of storage. But these interesting use cases also pose substantial practical challenges. Naturally, integration and interoperability with a variety of technical systems is one. A more basic question is how the automated workflows of machine-actionable DMPs would integrate with other institutional processes, such as ethics approval procedures. Today’s DMPs document decisions taken via such “traditional” processes. Accordingly, it remains an open question how these processes would work with machine-actionable DMP systems which actively steer decision-making – instead of just documenting it.

The second group exercise focused on the proposed common data model for machine-actionable DMPs. The model aims to capture DMP-relevant data in five areas: Administrative Roles and Responsibilities; Data; Infrastructure; Security, Privacy, and Access Control; and Policies, Legal and Ethical Aspects. Workshop participants discussed various options to reuse existing vocabularies and entities, such as Dublin Core format options, as well as entire ontologies, such as the PROV-O ontology for provenance information. The main challenge, which requires ongoing work, is to identify and formulate general concepts that can reflect a variety of different use cases and cross-domain requirements. The documentation of access controls is one area where the data model could draw from the vocabulary used by RDSS, which defines five different access control levels: “open”, “safe-guarded” (requiring login), “restricted” (requiring submission of information on intended use), “controlled” (access control by dedicated body, such as data access committee), and “closed”.
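As a rough illustration of what a controlled vocabulary for access control could look like inside a machine-actionable DMP, the sketch below encodes the five levels and the five model areas listed above. The class, constant and function names (`AccessLevel`, `DMP_AREAS`, `DatasetEntry`, `parse_access_level`) are hypothetical and chosen for this example; this is not the RDSS schema or the RDA common data model.

```python
from dataclasses import dataclass
from enum import Enum


class AccessLevel(Enum):
    """The five access control levels quoted above (names are illustrative)."""
    OPEN = "open"
    SAFEGUARDED = "safe-guarded"  # requires login
    RESTRICTED = "restricted"     # requires information on intended use
    CONTROLLED = "controlled"     # decided by a dedicated body
    CLOSED = "closed"


# The five areas of the proposed common data model, as listed in the text.
DMP_AREAS = (
    "Administrative Roles and Responsibilities",
    "Data",
    "Infrastructure",
    "Security, Privacy, and Access Control",
    "Policies, Legal and Ethical Aspects",
)


@dataclass
class DatasetEntry:
    """A hypothetical, minimal dataset description within a DMP."""
    title: str
    access: AccessLevel


def parse_access_level(label: str) -> AccessLevel:
    """Map a free-text label to a level; raises ValueError if unknown."""
    return AccessLevel(label.strip().lower())


entry = DatasetEntry("Interview transcripts", parse_access_level("Controlled"))
print(entry.access.value)
```

Rejecting unknown labels with an error, rather than silently accepting free text, is one way a system could keep access statements consistent across institutions.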

Summary

With Jisc ORCID services already in operation, and with RDSS ready to launch in November, it is vital that we understand the latest thinking in related areas and reflect such emerging trends in the technology and services that we offer. In relation to identifiers and the machine-actionability of policy documents (such as DMPs) in particular, this work has international scope, which we cover through our involvement in forums and projects such as RDA and EOSC. Let us know in the comments how these different areas affect your day-to-day work with researchers, and policy and strategy planning at your institutions.